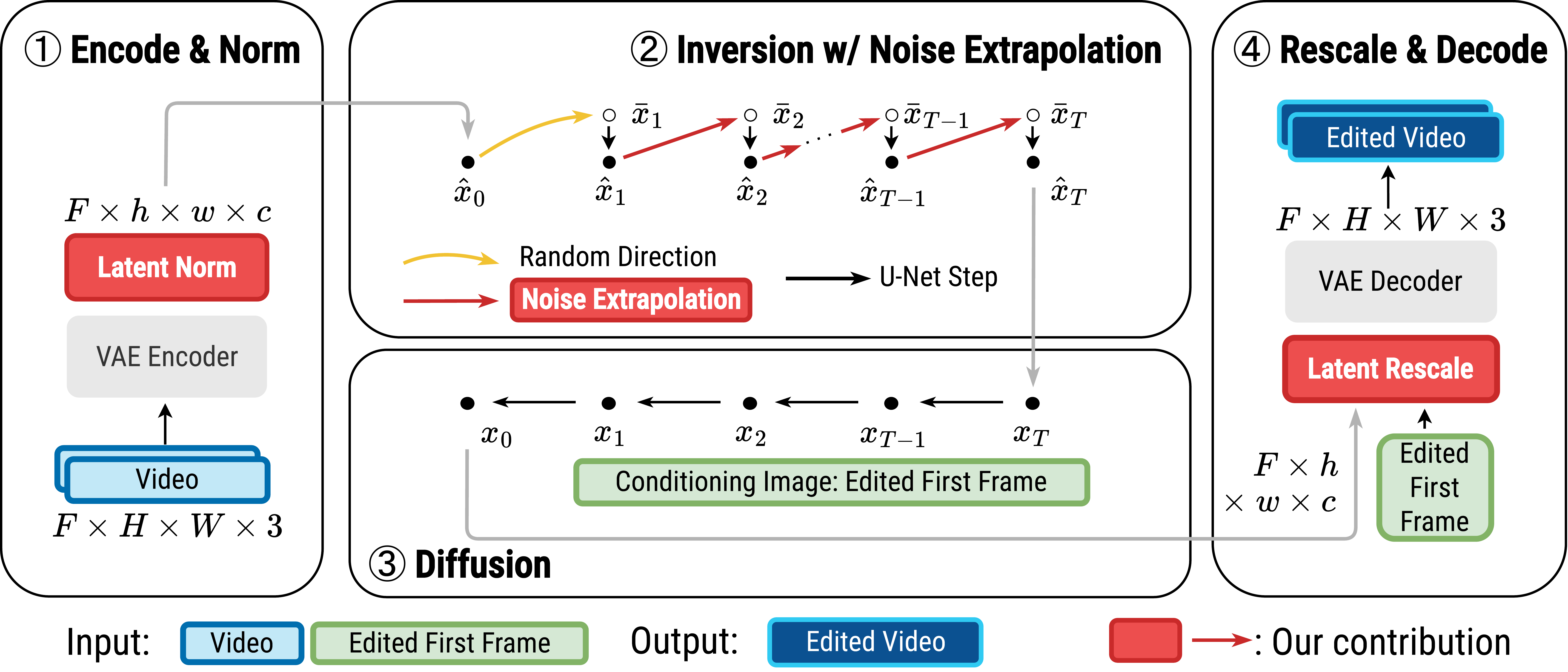

We introduce Videoshop, a training-free video editing algorithm for localized semantic edits. Videoshop lets users modify the first frame with any editing software, including Photoshop and generative inpainting, and automatically propagates those changes to the remaining frames with semantically, spatially, and temporally consistent motion. Unlike existing methods that support edits only through imprecise textual instructions, Videoshop lets users add or remove objects, semantically change objects, insert stock photos into videos, and more, with fine-grained control over location and appearance. We achieve this through image-based video editing: we invert latents with noise extrapolation and then generate videos conditioned on the edited image. Videoshop produces higher-quality edits than 6 baselines on 2 editing benchmarks, using 10 evaluation metrics.
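The abstract only names the inversion step. As background, the sketch below shows standard deterministic DDIM inversion, the generic procedure that maps a clean latent to progressively noisier latents so a diffusion model can later regenerate it. It does not reproduce Videoshop's noise extrapolation or its image conditioning. The noise predictor, the linear beta schedule, and all function names (`make_alpha_bars`, `ddim_move`, `ddim_invert`, `ddim_sample`) are illustrative assumptions, and latents are plain Python lists rather than tensors.

```python
import math
from typing import Callable, List

Vector = List[float]
# Hypothetical noise-predictor signature: (latent, timestep index) -> predicted noise.
NoisePredictor = Callable[[Vector, int], Vector]


def make_alpha_bars(num_steps: int, beta_start: float = 1e-4, beta_end: float = 0.02) -> List[float]:
    """Cumulative products of (1 - beta) for an assumed linear beta schedule."""
    alpha_bars = []
    prod = 1.0
    for i in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * i / max(num_steps - 1, 1)
        prod *= 1.0 - beta
        alpha_bars.append(prod)
    return alpha_bars


def ddim_move(x: Vector, eps: Vector, a_from: float, a_to: float) -> Vector:
    """Deterministic DDIM update from noise level a_from to a_to, reusing the same eps."""
    out = []
    for xi, ei in zip(x, eps):
        x0_pred = (xi - math.sqrt(1.0 - a_from) * ei) / math.sqrt(a_from)  # predicted clean value
        out.append(math.sqrt(a_to) * x0_pred + math.sqrt(1.0 - a_to) * ei)
    return out


def ddim_invert(x0: Vector, predict_noise: NoisePredictor, alpha_bars: List[float]) -> List[Vector]:
    """Walk a clean latent up the noise schedule; returns the whole trajectory."""
    traj = [x0]
    x = x0
    for t in range(len(alpha_bars) - 1):
        # Approximation: the noise is evaluated at the less-noisy latent x_t.
        eps = predict_noise(x, t)
        x = ddim_move(x, eps, alpha_bars[t], alpha_bars[t + 1])
        traj.append(x)
    return traj


def ddim_sample(x_noisy: Vector, predict_noise: NoisePredictor, alpha_bars: List[float]) -> Vector:
    """Run the deterministic DDIM sampler back down to the least-noisy level."""
    x = x_noisy
    for t in range(len(alpha_bars) - 1, 0, -1):
        eps = predict_noise(x, t)  # noise evaluated at the noisier latent x_t
        x = ddim_move(x, eps, alpha_bars[t], alpha_bars[t - 1])
    return x


if __name__ == "__main__":
    alpha_bars = make_alpha_bars(50)
    x0 = [1.0, -1.0, 0.5]
    constant = lambda x, t: [0.1, -0.2, 0.3]  # ignores its input
    linear = lambda x, t: [0.5 * v for v in x]  # depends on its input
    for name, predictor in [("constant", constant), ("linear", linear)]:
        recon = ddim_sample(ddim_invert(x0, predictor, alpha_bars)[-1], predictor, alpha_bars)
        err = max(abs(a - b) for a, b in zip(recon, x0))
        print(f"{name} predictor round-trip error: {err:.2e}")
```

With a noise predictor that ignores its input, inverting and then sampling returns the starting latent up to floating-point error. With an input-dependent predictor the round trip drifts, because inversion evaluates the noise at the less-noisy latent while sampling evaluates it at the noisier one; this approximation error is what more careful inversion schemes try to reduce.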

@misc{fan2024videoshop,
  title={Videoshop: Localized Semantic Video Editing with Noise-Extrapolated Diffusion Inversion},
  author={Xiang Fan and Anand Bhattad and Ranjay Krishna},
  year={2024},
  eprint={2403.14617},
  archivePrefix={arXiv},
  primaryClass={cs.CV}
}